In the rapidly evolving world of machine learning (ML) and artificial intelligence (AI), data labeling has emerged as a pivotal step in model development. Data labeling, the process of annotating raw data for supervised learning tasks, is essential for training AI systems to recognize patterns, classify data, and make predictions. However, as the demand for larger, more accurate datasets grows, traditional manual labeling is proving to be time-consuming, costly, and prone to human error. As a result, there is a growing need for advanced data labeling techniques that streamline the process while improving quality and efficiency.

One of the most promising advancements in this field is the emergence of hybrid approaches that combine human expertise with machine learning automation. These methods aim to balance the precision of human annotators with the speed and scalability of AI algorithms. Additionally, large language models (LLMs) have changed the landscape of data labeling by taking on some tasks that were previously reserved for human annotators. In this post, we will explore how hybrid approaches and LLMs are transforming the data labeling process and what that means for industries reliant on AI and machine learning.

What Are Hybrid Approaches in Data Labeling?

Definition and Key Concepts

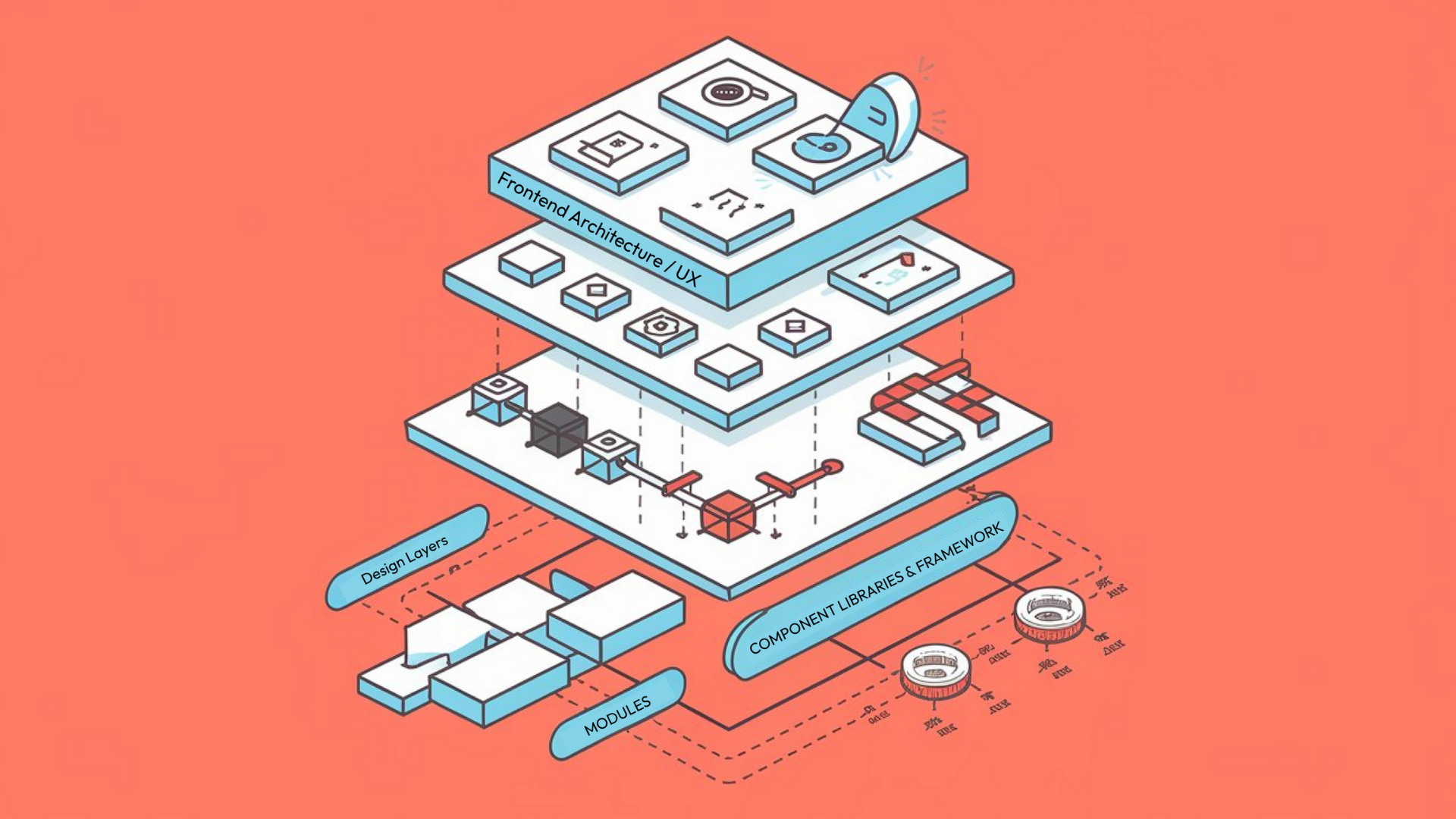

The hybrid approach to data labeling integrates the strengths of both human annotators and AI algorithms, leveraging the speed and scalability of machines while maintaining the precision and nuanced understanding of humans. The idea is simple: use AI tools to handle repetitive, large-scale tasks, while human experts step in for complex, ambiguous, or subjective labeling tasks that require deep understanding or context.

Active Learning: Enhancing Labeling Efficiency

One popular hybrid model is active learning. In active learning, an AI model is trained on a small labeled dataset and then selects the data points it is most uncertain about for human labeling. The newly labeled examples are added to the training set and the model is retrained, so human effort goes to the examples that teach the model the most. This reduces the overall amount of human labeling while keeping the dataset high-quality. The technique is particularly useful in domains like medical imaging or natural language processing, where the volume of data is immense but annotation is time-consuming and requires expert knowledge.
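The selection step can be sketched in a few lines. This is a minimal illustration of one common strategy (entropy-based uncertainty sampling), not a full active learning loop. The item ids, the probabilities, and the `select_for_labeling` helper are all hypothetical, and a real system would get the probabilities from its own model.

```python
import math

def entropy(probs):
    """Shannon entropy of a predicted class distribution (higher = less certain)."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def select_for_labeling(predictions, budget):
    """Return the ids of the `budget` items the model is least certain about.

    `predictions` maps an item id to the model's class probabilities.
    """
    ranked = sorted(
        predictions,
        key=lambda item_id: entropy(predictions[item_id]),
        reverse=True,
    )
    return ranked[:budget]

# Hypothetical model outputs for four unlabeled items (two classes).
predictions = {
    "scan_001": [0.98, 0.02],
    "scan_002": [0.55, 0.45],
    "scan_003": [0.70, 0.30],
    "scan_004": [0.50, 0.50],
}

# With a budget of two human labels, the two most ambiguous items are chosen.
to_label = select_for_labeling(predictions, budget=2)
print(to_label)
```

Entropy is only one way to score uncertainty; margin between the top two classes or disagreement among several models are common alternatives.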

Semi-Supervised Learning: Balancing Labeled and Unlabeled Data

Another hybrid technique is semi-supervised learning, where the model is first trained on a small amount of labeled data and then uses unlabeled data to further refine its predictions, for example by treating its own high-confidence predictions as additional training labels (often called pseudo-labeling or self-training). In this setup, human labels are only needed for a small subset of the data, which can dramatically reduce the cost and time of labeling large datasets.
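As a rough illustration, here is a small self-training sketch, which is one of several semi-supervised techniques. It uses a toy nearest-centroid classifier on 2-D points so that it runs with the standard library alone. The `min_margin` threshold, the data, and the function names are assumptions made for the example, and it expects at least two classes in the labeled set.

```python
import math

def centroids(points, labels):
    """Mean point per class."""
    sums = {}
    for (x, y), label in zip(points, labels):
        sx, sy, n = sums.get(label, (0.0, 0.0, 0))
        sums[label] = (sx + x, sy + y, n + 1)
    return {label: (sx / n, sy / n) for label, (sx, sy, n) in sums.items()}

def predict_with_margin(point, cents):
    """Return (nearest class, gap between the two nearest centroid distances)."""
    dists = sorted((math.dist(point, c), label) for label, c in cents.items())
    (d1, label), (d2, _) = dists[0], dists[1]
    return label, d2 - d1

def self_train(labeled, unlabeled, min_margin=1.0, max_rounds=5):
    """Grow the training set with confident pseudo-labels, round by round."""
    points = [p for p, _ in labeled]
    labels = [l for _, l in labeled]
    pool = list(unlabeled)
    for _ in range(max_rounds):
        cents = centroids(points, labels)
        confident, remaining = [], []
        for p in pool:
            label, margin = predict_with_margin(p, cents)
            (confident if margin >= min_margin else remaining).append((p, label))
        if not confident:
            break  # nothing left that the model is sure about
        for p, label in confident:
            points.append(p)
            labels.append(label)
        pool = [p for p, _ in remaining]
    return centroids(points, labels), pool

labeled = [((0, 0), "a"), ((10, 10), "b")]
unlabeled = [(1, 1), (9, 9), (5, 5.2)]
model, leftover = self_train(labeled, unlabeled)
print(model, leftover)
```

The point near the class boundary is left in the pool rather than pseudo-labeled, which is exactly the kind of item a human annotator would be asked to label.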

The hybrid approach is an ideal solution for industries that need to scale their data labeling efforts but want to ensure accuracy and relevance in their models. The combination of machine efficiency and human expertise makes it a powerful tool in fields ranging from healthcare to autonomous vehicles, where high-quality labeled data is crucial for success.

The Role of Large Language Models (LLMs) in Data Labeling

What Are LLMs and How Do They Work?

Large language models (LLMs), like OpenAI’s GPT series or Google’s PaLM, have become indispensable in the realm of natural language processing (NLP) and, more recently, in data labeling. These models have been trained on vast amounts of text data, enabling them to generate human-like text, comprehend context, and understand intricate linguistic patterns. As such, they are being leveraged to assist in labeling data for a range of NLP tasks, such as sentiment analysis, named entity recognition, and text classification.

Automating Data Labeling with LLMs

One of the key advantages of LLMs in data labeling is their ability to process and understand natural language at scale. LLMs can automatically generate labels for large text corpora, significantly reducing the need for manual annotation. For example, an LLM can be tasked with labeling a collection of news articles, categorizing them into topics like politics, sports, or entertainment, based on the text’s content. This can save considerable time and effort compared to traditional human labeling.
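A minimal sketch of the news-article example might look like the following. The prompt wording, the label set, and the `call_llm` parameter are assumptions for illustration. `fake_llm` is a keyword-based stand-in so the code runs offline, and in practice it would be replaced by a call to whichever model API you use.

```python
ALLOWED_LABELS = ("politics", "sports", "entertainment")

def build_prompt(article_text):
    """Ask for exactly one label from a fixed list."""
    options = ", ".join(ALLOWED_LABELS)
    return (
        f"Classify the news article into exactly one of: {options}.\n"
        "Answer with the label only.\n\n"
        f"Article: {article_text}"
    )

def parse_label(raw_response):
    """Normalize the model's reply; return None if it is not an allowed label."""
    cleaned = raw_response.strip().lower().rstrip(".")
    return cleaned if cleaned in ALLOWED_LABELS else None

def label_articles(articles, call_llm):
    """Label each article; replies that fail validation are set aside."""
    labeled, rejected = [], []
    for text in articles:
        label = parse_label(call_llm(build_prompt(text)))
        if label is None:
            rejected.append(text)
        else:
            labeled.append((text, label))
    return labeled, rejected

def fake_llm(prompt):
    """Hypothetical stand-in for a real model call."""
    article = prompt.split("Article: ", 1)[1].lower()
    if "election" in article:
        return "Politics."
    if "match" in article:
        return " sports "
    return "I am not sure."

articles = [
    "The election results were announced.",
    "The final match went to penalties.",
    "Quarterly earnings beat forecasts.",
]
labeled, rejected = label_articles(articles, fake_llm)
print(labeled)
print(rejected)
```

Validating the reply against a closed label set matters because a model can return free text, extra punctuation, or a category that was never offered.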

Using LLMs for Synthetic Data Generation

In addition to labeling existing datasets, LLMs can also be used to generate synthetic data, which can be used to augment training sets. This is particularly useful in cases where labeled data is scarce or expensive to obtain. For instance, in domains like rare disease diagnosis or legal research, where data may be limited, LLMs can generate realistic, contextually appropriate examples to help train models in the absence of large annotated datasets.

The Challenges of Using LLMs for Data Labeling

However, the use of LLMs for data labeling also presents challenges. One of the main concerns is the accuracy of the labels produced by these models. While LLMs have made impressive strides in understanding language, they are not infallible. They can sometimes misinterpret context, resulting in incorrect labels. This is where hybrid approaches come into play, as human oversight can ensure that the model’s labels align with the true meaning of the data.
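One way to put a number on label accuracy is to have humans label a small audit sample and measure agreement with the model. The sketch below computes Cohen's kappa, which corrects raw agreement for agreement expected by chance. The sample labels are made up for illustration.

```python
from collections import Counter

def cohens_kappa(labels_a, labels_b):
    """Chance-corrected agreement between two label sequences."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    # Probability that both sources pick the same label by chance.
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both sources used one identical label throughout
    return (observed - expected) / (1 - expected)

# Hypothetical audit sample: model labels vs. human labels for six items.
llm_labels = ["pos", "pos", "neg", "neg", "pos", "neg"]
human_labels = ["pos", "neg", "neg", "neg", "pos", "neg"]

kappa = cohens_kappa(llm_labels, human_labels)
print(round(kappa, 3))
```

A kappa near 1 suggests the model's labels can be trusted for that task, while a low value is a signal to route more items to human review.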

Despite these challenges, the integration of LLMs into the data labeling process is revolutionizing the field, making it faster, more scalable, and cost-effective. As LLMs continue to improve, they are likely to play an even greater role in automating data labeling across a variety of industries.

Combining Hybrid Approaches with LLMs: The Next Step in Data Labeling

Enhancing Labeling Accuracy and Efficiency

The combination of hybrid approaches and LLMs represents the next frontier in data labeling. By combining the strengths of both techniques, organizations can achieve a high level of accuracy while optimizing costs and resources.

In this combined approach, LLMs are used for initial labeling, followed by human annotators who review and correct the model’s output. This iterative process keeps the labeled data accurate and consistent, with human oversight acting as a safety net for the limitations of machine-generated labels. For example, LLMs could be employed to label large amounts of text data, with human experts verifying the labels and refining them as needed.
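The review step can be sketched as a simple routing rule. This example assumes each machine label comes with a confidence score, which not every model provides, and the 0.9 threshold, the `Candidate` type, and the document ids are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Candidate:
    item_id: str
    label: str
    confidence: float  # assumed to be reported by the labeling model

def route(candidates, threshold=0.9):
    """Auto-accept confident labels; queue the rest for human review."""
    accepted = {c.item_id: c.label for c in candidates if c.confidence >= threshold}
    review_queue = [c for c in candidates if c.confidence < threshold]
    return accepted, review_queue

def merge_reviews(accepted, review_queue, human_labels):
    """Combine auto-accepted labels with human decisions.

    Queued items that have not been reviewed yet stay out of the final set.
    """
    final = dict(accepted)
    for c in review_queue:
        if c.item_id in human_labels:
            final[c.item_id] = human_labels[c.item_id]
    return final

candidates = [
    Candidate("doc_1", "invoice", 0.97),
    Candidate("doc_2", "contract", 0.62),
    Candidate("doc_3", "invoice", 0.88),
]
accepted, review_queue = route(candidates)

# A reviewer corrects doc_2; doc_3 is still waiting in the queue.
human_labels = {"doc_2": "receipt"}
final = merge_reviews(accepted, review_queue, human_labels)
print(final)
```

Lowering the threshold sends fewer items to humans at the cost of more unreviewed machine labels, so the value is worth tuning against an audited sample.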

Benefits of Combining Hybrid and LLM Approaches

This method not only improves efficiency but also enhances the quality of the labeled data. LLMs are capable of processing and labeling vast datasets in a fraction of the time it would take human annotators, allowing for rapid scaling of data labeling projects. At the same time, human annotators check that the labels are contextually accurate, catching the errors the model makes in complex or nuanced scenarios.

In industries where accuracy is paramount, such as healthcare or finance, the hybrid model helps ensure that the data used to train AI systems is of high quality. By using LLMs to automate the repetitive and time-consuming aspects of data labeling, organizations can focus their human resources on the more complex tasks that require subject matter expertise, thus striking a balance between efficiency and precision.

The Future of Data Labeling: Challenges and Opportunities

The Growing Role of AI in Data Labeling

As machine learning and AI continue to evolve, so too will the methods and tools used for data labeling. The combination of hybrid approaches and LLMs represents just the beginning of a new era in data annotation, with exciting potential for further innovation.

However, challenges remain. One of the primary concerns is the ongoing need for human expertise in certain domains. While LLMs and AI models can automate much of the data labeling process, they still struggle with tasks that require deep contextual understanding, empathy, or domain-specific knowledge. This means that, even with the advancements in machine learning, human annotators will remain a crucial part of the data labeling ecosystem, especially for tasks involving complex or subjective data.

Ensuring Fairness and Reducing Bias in Labeled Data

Additionally, there is the challenge of ensuring that data labeled by AI models is unbiased and representative. AI models are trained on existing datasets, which can sometimes reflect societal biases. If these biases are not carefully monitored, they can be perpetuated in the labeled data, leading to inaccurate or unfair predictions. Addressing these issues will require continuous efforts to improve the fairness and transparency of AI systems.
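A simple first check for this kind of bias is to compare how often a given label is assigned across groups in the data. The sketch below is only a starting point and not a complete fairness audit. The group names, labels, and records are invented for the example.

```python
from collections import defaultdict

def label_rate_by_group(records, target_label):
    """Share of items in each group that received `target_label`.

    `records` is an iterable of (group, label) pairs.
    """
    totals = defaultdict(int)
    hits = defaultdict(int)
    for group, label in records:
        totals[group] += 1
        if label == target_label:
            hits[group] += 1
    return {group: hits[group] / totals[group] for group in totals}

# Hypothetical machine-labeled records tagged with a group attribute.
records = [
    ("group_a", "approved"), ("group_a", "approved"),
    ("group_a", "denied"), ("group_a", "approved"),
    ("group_b", "approved"), ("group_b", "denied"),
    ("group_b", "denied"), ("group_b", "denied"),
]

rates = label_rate_by_group(records, target_label="approved")
gap = max(rates.values()) - min(rates.values())
print(rates, gap)
```

A large gap does not prove the labels are unfair, since the groups may genuinely differ, but it flags where human reviewers should look more closely.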

The Future of Hybrid Data Labeling Models

Despite these challenges, the future of data labeling looks promising. With further advancements in LLMs, hybrid models, and other emerging technologies, data labeling will become faster, more efficient, and more scalable, enabling organizations to create high-quality datasets for training AI models across various industries.

Final Thoughts: The Impact of Hybrid and LLM Approaches on Data Labeling

Advanced data labeling methods, particularly the combination of hybrid approaches and large language models, are transforming the landscape of AI and machine learning. By integrating the precision of human expertise with the speed of machine automation, these methods enable the creation of high-quality labeled datasets at scale. Hybrid approaches, such as active and semi-supervised learning, are helping to reduce costs and human labor, while LLMs are accelerating the labeling process by automating tasks that were once manual.

While challenges remain, particularly in ensuring accuracy and fairness, the future of data labeling is bright. As technology continues to evolve, the integration of hybrid models and LLMs will only improve, making the data labeling process more efficient, scalable, and accessible. For industries that rely on machine learning and AI, this is a game-changer, opening up new possibilities for creating smarter, more capable systems that can tackle complex problems with unprecedented speed and accuracy.

Jahanzaib is a Content Contributor at Technado, specializing in cybersecurity. With expertise in identifying vulnerabilities and developing robust solutions, he delivers valuable insights into securing the digital landscape.